Artificial Intelligence (AI) is no longer confined to powerful data centers or cloud platforms. With the rise of Edge AI, machine learning (ML) models can now run directly on mobile devices, IoT systems, and embedded hardware, enabling real-time intelligence closer to where data is generated.

Edge AI is gaining ground in industries like healthcare, manufacturing, automotive, and consumer electronics because it offers lower latency, less dependence on connectivity, and stronger privacy. In this post, we’ll explore what Edge AI is, how it works, its benefits, challenges, and real-world applications.

What is Edge AI?

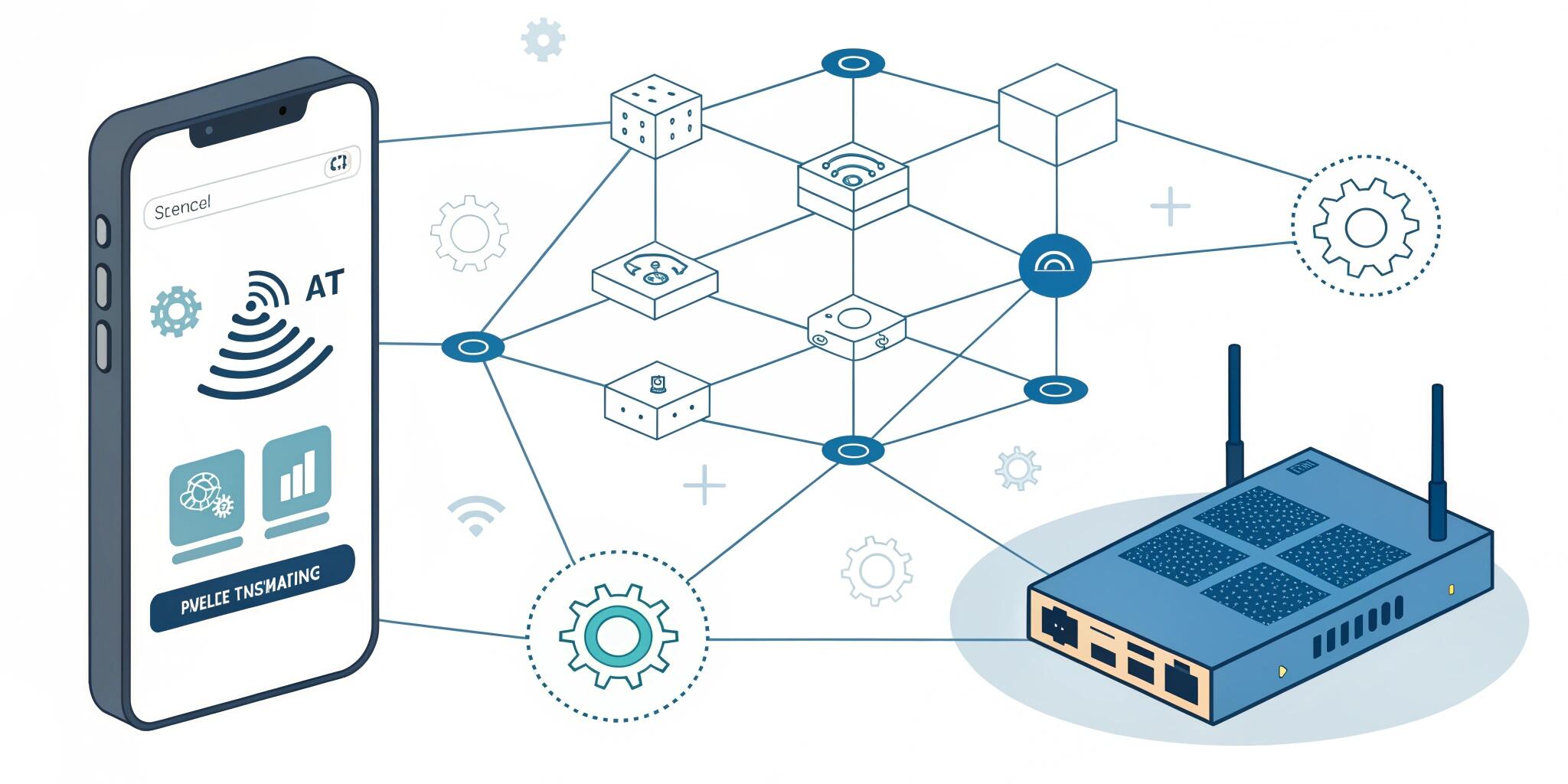

Edge AI refers to the deployment of machine learning models directly on edge devices such as smartphones, IoT sensors, cameras, wearables, or embedded systems. Unlike cloud-based AI, where data must be sent to remote servers for processing, Edge AI processes data locally on the device itself.

This shift allows for low-latency, real-time decision-making without constant reliance on internet connectivity.

How Edge AI Works

- Model Training: Typically, ML models are trained in the cloud or on-premises using large datasets.

- Model Optimization: To run on resource-constrained devices, models are compressed or optimized using techniques such as quantization, pruning, or knowledge distillation.

- On-Device Inference: The optimized model is deployed to mobile or IoT devices, where it processes incoming data and makes predictions in real time.

- Edge-Cloud Collaboration: In some cases, edge devices perform initial processing while heavy workloads are sent to the cloud for refinement.
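To make the optimization step more concrete, here is a minimal sketch of post-training quantization, one of the techniques listed above. It maps 32-bit float weights to 8-bit integers using a scale and zero point, then maps them back to show how little precision is lost. The function names (`quantize_int8`, `dequantize`) and the sample weights are illustrative assumptions, not any framework's API; real toolchains typically do this per tensor or per channel, often with calibration data.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float, int]:
    """Affine-quantize floats to the int8 range [-128, 127].

    Returns (quantized_values, scale, zero_point).
    """
    # Widen the range to include 0.0 so that zero is represented exactly.
    lo = min(min(weights), 0.0)
    hi = max(max(weights), 0.0)
    scale = (hi - lo) / 255.0
    if scale == 0.0:  # all weights are zero; any positive scale works
        scale = 1.0
    zero_point = round(-128 - lo / scale)
    quantized = [
        max(-128, min(127, round(w / scale) + zero_point)) for w in weights
    ]
    return quantized, scale, zero_point


def dequantize(quantized: List[int], scale: float, zero_point: int) -> List[float]:
    """Map int8 values back to approximate float weights."""
    return [(q - zero_point) * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [-0.82, -0.11, 0.0, 0.37, 1.24]
q, scale, zero_point = quantize_int8(weights)
restored = dequantize(q, scale, zero_point)

print("int8 values:", q)
print("max round-trip error:", max(abs(w - r) for w, r in zip(weights, restored)))
```

Each weight now needs one byte instead of four, and the round-trip error stays within one quantization step (`scale`).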

Benefits of Edge AI

- Low Latency

  - Immediate responses without waiting for data transfer to the cloud.

  - Critical for applications like autonomous driving or real-time healthcare monitoring.

- Improved Privacy & Security

  - Sensitive data (e.g., health records, images) remains on-device.

  - Reduces exposure to data breaches and compliance risks.

- Reduced Bandwidth Usage

  - Only processed insights (not raw data) are sent to the cloud, lowering network strain.

- Offline Functionality

  - Works even in low-connectivity or remote environments (e.g., rural IoT deployments).

- Energy Efficiency

  - Running an optimized model locally can use less power than keeping the radio active to stream data to the cloud continuously.
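The bandwidth benefit is easy to see with a small sketch. Assume a hypothetical sensor that collects 1,000 temperature readings per reporting window; instead of uploading every reading, the device computes a short summary and sends only that. The function name `summarize_window` and the synthetic readings are assumptions made for illustration.

```python
import json
import statistics
from typing import Dict, List


def summarize_window(samples: List[float]) -> Dict[str, float]:
    """Reduce a window of raw readings to a few on-device statistics."""
    return {
        "count": len(samples),
        "mean": round(statistics.fmean(samples), 3),
        "min": min(samples),
        "max": max(samples),
    }


# Synthetic readings standing in for one reporting window of sensor data.
raw_window = [20.0 + 0.01 * i for i in range(1000)]

raw_payload = json.dumps(raw_window).encode("utf-8")
summary_payload = json.dumps(summarize_window(raw_window)).encode("utf-8")

print("raw payload bytes:    ", len(raw_payload))
print("summary payload bytes:", len(summary_payload))
```

The exact savings depend on the encoding and on how much detail the cloud side really needs, so treat the numbers as an order-of-magnitude illustration only.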

Challenges of Running ML Models on Edge Devices

- Limited Computational Power

  - Mobile and IoT devices have restricted CPU/GPU resources compared to cloud servers.

- Model Size Constraints

  - Large models (e.g., deep neural networks) must be compressed to fit within device memory and storage.

- Battery Consumption

  - Running inference locally can drain battery life if not optimized.

- Hardware Diversity

  - IoT ecosystems involve varied hardware architectures, making deployment complex.

- Model Updates

  - Regular updates and improvements are harder to roll out across millions of devices.
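The model size constraint comes down to simple arithmetic: the weights alone take roughly (number of parameters) x (bits per parameter) / 8 bytes. The sketch below applies that to a hypothetical 5-million-parameter model at three common precisions. The parameter count and the helper name `model_size_mb` are assumptions for illustration, and the estimate ignores activations, runtime overhead, and file-format metadata.

```python
def model_size_mb(num_params: int, bits_per_param: int) -> float:
    """Approximate size of the weights in megabytes (1 MB = 1,000,000 bytes)."""
    return num_params * bits_per_param / 8 / 1_000_000


NUM_PARAMS = 5_000_000  # hypothetical model size, chosen for illustration

for name, bits in [("float32", 32), ("float16", 16), ("int8", 8)]:
    print(f"{name}: {model_size_mb(NUM_PARAMS, bits):.1f} MB")
```

Going from float32 to int8 cuts the weight footprint to a quarter, which is often the difference between fitting on a constrained device and not.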

Tools and Frameworks for Edge AI

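- TensorFlow Lite – Google’s lightweight runtime for running TensorFlow models on mobile and IoT devices (rebranded as LiteRT in 2024).

- PyTorch Mobile – Runtime for running PyTorch models inside Android and iOS apps.

- ONNX Runtime Mobile – Reduced-size build of ONNX Runtime for running models in the Open Neural Network Exchange (ONNX) format on mobile devices, which helps with portability across training frameworks.

- Core ML – Apple’s framework for running AI models on-device on iOS and other Apple platforms.

- NVIDIA Jetson – Embedded GPU hardware platform for running Edge AI on robotics and IoT devices.

- Qualcomm AI Engine – Accelerates AI workloads on Snapdragon processors.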

Real-World Applications of Edge AI

- Healthcare

  - Wearables tracking vitals in real time.

  - On-device anomaly detection for heart rate or glucose levels.

- Autonomous Vehicles

  - Processing data from cameras and LiDAR sensors locally for safe driving decisions.

- Smart Homes & IoT

  - AI-enabled security cameras performing facial recognition without cloud dependency.

  - Smart speakers processing voice commands locally for faster responses.

- Retail

  - Edge AI-powered smart shelves tracking product stock in real time.

  - Personalized promotions based on in-store customer behavior.

- Manufacturing

  - Predictive maintenance using IoT sensors.

  - Real-time quality inspection on assembly lines.
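As a rough illustration of the on-device anomaly detection mentioned under Healthcare, the sketch below flags a heart-rate reading when it deviates sharply from a rolling baseline. It uses a simple z-score rule rather than a trained model, and the class name `RollingAnomalyDetector`, the window size, the threshold, and the sample readings are all assumptions made for illustration, not clinical guidance.

```python
import statistics
from collections import deque


class RollingAnomalyDetector:
    """Flags values far from the mean of a recent window of normal readings."""

    def __init__(self, window: int = 30, threshold: float = 3.0, warmup: int = 5):
        self.history = deque(maxlen=window)  # recent non-anomalous readings
        self.threshold = threshold           # z-score above which we flag
        self.warmup = warmup                 # readings needed before flagging

    def update(self, value: float) -> bool:
        """Return True if `value` looks anomalous against the rolling baseline."""
        is_anomaly = False
        if len(self.history) >= self.warmup:
            mean = statistics.fmean(self.history)
            stdev = statistics.pstdev(self.history)
            if stdev > 0 and abs(value - mean) / stdev > self.threshold:
                is_anomaly = True
        # Keep flagged values out of the baseline so one spike does not skew it.
        if not is_anomaly:
            self.history.append(value)
        return is_anomaly


# Hypothetical heart-rate readings in beats per minute, with one spike.
readings = [72, 74, 71, 73, 75, 72, 74, 73, 140, 72]
detector = RollingAnomalyDetector()
flags = [detector.update(bpm) for bpm in readings]
print(flags)
```

Because everything runs on a small in-memory window, a check like this can run on a wearable without sending raw vitals off the device. One trade-off of excluding flagged values from the baseline is that a sustained shift will keep being flagged until it is handled some other way.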

The Future of Edge AI

As hardware continues to improve and 5G networks expand, the adoption of Edge AI is likely to accelerate. We can expect:

- More lightweight deep learning models designed for edge devices.

- Federated learning, where devices train a shared model collaboratively by exchanging model updates instead of raw data.

- Greater integration of AI chips (e.g., Google Edge TPU, Apple Neural Engine) for on-device intelligence.

Edge AI will play a critical role in the future of autonomous systems, connected devices, and real-time analytics, reshaping how we interact with technology.
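To give a feel for the federated learning idea mentioned above, here is a minimal sketch of the aggregation step in the style of federated averaging: each device trains locally and reports only its model weights and how many samples it trained on, and a coordinator combines them into a sample-weighted average. The function name `federated_average` and the two hypothetical device updates are assumptions for illustration; production systems add secure aggregation, client sampling, and many rounds of training.

```python
from typing import List, Tuple

# One update = (locally trained weights, number of local training samples).
ClientUpdate = Tuple[List[float], int]


def federated_average(updates: List[ClientUpdate]) -> List[float]:
    """Combine client weights into a global model, weighted by sample count."""
    total_samples = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [
        sum(weights[i] * n for weights, n in updates) / total_samples
        for i in range(dim)
    ]


# Two hypothetical devices; the raw training data never leaves either one.
updates = [
    ([1.0, 2.0], 100),
    ([3.0, 4.0], 300),
]
print(federated_average(updates))
```

The device with more local data pulls the global model further toward its own weights, which is the core of the weighting scheme.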

Conclusion

Edge AI bridges the gap between cloud intelligence and on-device processing, enabling real-time, private, and efficient AI applications. While challenges like computational limits and battery usage remain, advances in model optimization and hardware acceleration are making Edge AI a reality across industries.

For businesses and developers, adopting Edge AI can offer a competitive advantage: faster, smarter, and more secure AI experiences.