As artificial intelligence (AI) becomes deeply integrated into decision-making systems, from healthcare diagnostics to financial approvals, understanding how AI reaches its conclusions has become increasingly important. Enter Explainable AI (XAI), a set of methods that aims to make AI models transparent, interpretable, and accountable.

In an era where algorithms influence critical human outcomes, trust and transparency are no longer optional; they are essential. Explainable AI lets users inspect the logic behind predictions, which makes it easier to keep AI systems ethical, fair, and human-centered.

What Is Explainable AI (XAI)?

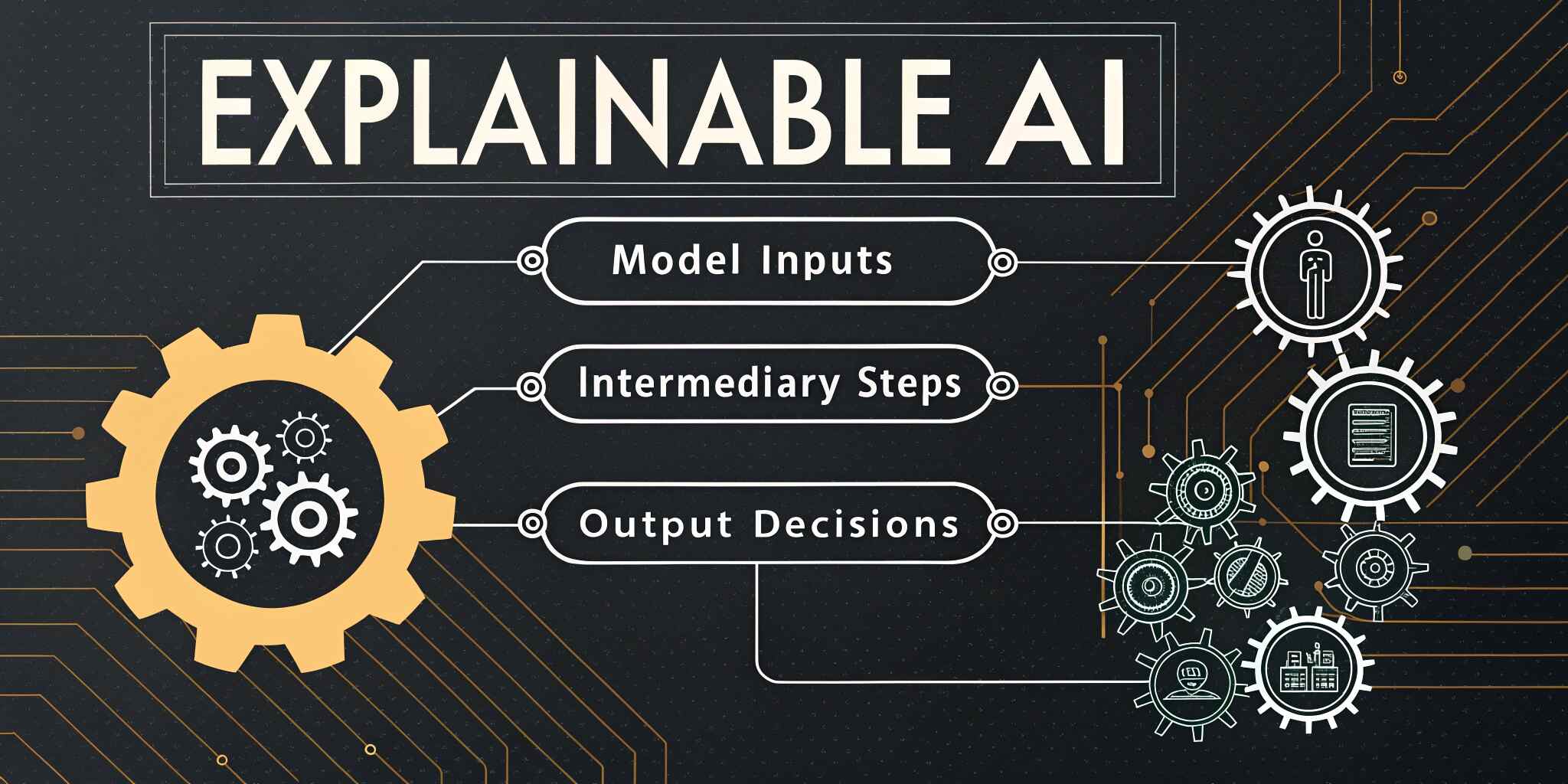

Explainable AI refers to a set of methods and processes that make the outcomes of AI systems understandable to humans. It goes beyond generating predictions—it provides reasoning behind them.

For example, in a loan approval system, instead of simply rejecting an applicant, an explainable model can highlight which factors (such as credit score, income, or payment history) influenced the decision.
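To make the loan example concrete, here is a minimal sketch of how an inherently interpretable scoring model could report the factors behind a decision. The weights, bias, feature scaling, and applicant values are all hypothetical and chosen only for illustration; a real system would learn them from data.

```python
import math

# Hypothetical weights for a logistic scoring model. Features are assumed
# to be standardized so that 0.0 represents an average applicant.
WEIGHTS = {"credit_score": 1.8, "income": 0.9, "payment_history": 1.4}
BIAS = -0.2


def explain_decision(applicant, threshold=0.5):
    """Return the decision, approval probability, and per-feature contributions."""
    # In a linear model, each feature's contribution is simply weight * value.
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    logit = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-logit))
    decision = "approved" if probability >= threshold else "rejected"
    # Sort so the factors that hurt the application most come first.
    ranked = sorted(contributions.items(), key=lambda item: item[1])
    return decision, probability, ranked


# Hypothetical applicant: below-average credit score and payment history.
applicant = {"credit_score": -1.2, "income": 0.3, "payment_history": -0.5}
decision, probability, ranked = explain_decision(applicant)

print(f"Decision: {decision} (approval probability {probability:.2f})")
for name, value in ranked:
    print(f"  {name}: {value:+.2f}")
```

For this applicant the model rejects the loan, and the ranked list shows that the credit score pulled the result down the most, followed by payment history, while income pushed slightly in the applicant's favor.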

XAI thus bridges the gap between black-box AI models (which are often opaque) and human interpretability, adding transparency while sacrificing as little predictive performance as possible.

Why Transparency in AI Matters

1. Building Trust and Adoption

Users are more likely to embrace AI-driven systems when they can understand their reasoning. Transparent models inspire confidence in automated decision-making, especially in sensitive sectors like healthcare and finance.

2. Ensuring Ethical AI Practices

AI systems can unintentionally perpetuate bias if trained on skewed data. Explainable AI helps surface such biases so they can be mitigated, supporting fairness and accountability.
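One simple way to check for the kind of bias described above is to compare how often a model produces a favorable outcome for different groups. The sketch below is only an illustration: the group labels and decisions are made-up data, and the function name is hypothetical.

```python
from collections import defaultdict


def selection_rates(records):
    """Share of favorable outcomes per group; records are (group, approved) pairs."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        positives[group] += int(approved)
    return {group: positives[group] / totals[group] for group in totals}


# Made-up model decisions for two groups of ten applicants each.
records = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)

rates = selection_rates(records)
# Two common summaries: the absolute gap and the ratio between group rates.
gap = max(rates.values()) - min(rates.values())
ratio = min(rates.values()) / max(rates.values())

print(rates)
print(f"gap={gap:.2f}, ratio={ratio:.2f}")
```

A large gap or a low ratio does not prove discrimination on its own, but it signals where a closer look at the training data and the model's explanations is warranted. What counts as an acceptable value is a policy decision, not something the code can settle.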

3. Supporting Regulatory Compliance

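Regulations such as the EU's GDPR and the EU AI Act, which entered into force in 2024, impose transparency obligations on automated decision-making. The GDPR, for instance, entitles individuals to meaningful information about the logic involved in certain solely automated decisions, often described as a "right to explanation". XAI helps organizations meet these requirements.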

4. Enhancing Model Debugging and Performance

Explainability allows developers to understand why models fail or behave unexpectedly, making it easier to improve accuracy and reliability.

5. Encouraging Human-AI Collaboration

Transparent AI systems enable humans to validate, question, and refine model outputs—fostering a partnership between human intelligence and machine learning.

How Explainable AI Works

Explainability can be achieved through two primary approaches:

a. Intrinsic Explainability – Designing models that are inherently interpretable, such as decision trees or linear regression.

b. Post-Hoc Explainability – Applying tools and techniques (like LIME, SHAP, or Grad-CAM) to interpret complex models like deep neural networks after training.

These tools estimate how much each input contributed to a prediction (feature attributions in LIME and SHAP, highlighted image regions in Grad-CAM) and present the result as human-readable explanations of model behavior.
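The idea behind post-hoc attribution can be sketched without any library. The example below computes exact Shapley values, the concept SHAP builds on, for a tiny stand-in model by enumerating every feature coalition. It is not the SHAP library's API: `black_box`, the instance, and the all-zero baseline are assumptions made for illustration, and the brute-force enumeration is only feasible for a handful of features.

```python
from itertools import combinations
from math import factorial


def black_box(x):
    """Stand-in for an opaque model; note the interaction between a and c."""
    return 3.0 * x["a"] + 2.0 * x["b"] + x["a"] * x["c"]


def shapley_values(model, instance, baseline):
    """Exact Shapley attributions for one prediction.

    Features outside a coalition are replaced by their baseline values.
    """
    features = list(instance)
    n = len(features)

    def value(coalition):
        mixed = {f: instance[f] if f in coalition else baseline[f] for f in features}
        return model(mixed)

    attributions = {}
    for feature in features:
        others = [f for f in features if f != feature]
        total = 0.0
        for size in range(n):
            # Standard Shapley weight for a coalition of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                coalition = set(subset)
                # Marginal contribution of adding the feature to the coalition.
                total += weight * (value(coalition | {feature}) - value(coalition))
        attributions[feature] = total
    return attributions


instance = {"a": 1.0, "b": 2.0, "c": 4.0}
baseline = {"a": 0.0, "b": 0.0, "c": 0.0}
phi = shapley_values(black_box, instance, baseline)
print(phi)
```

Here the attributions come out to roughly 5 for `a`, 4 for `b`, and 2 for `c`: the interaction term is split evenly between `a` and `c`, and the three values sum to the difference between the model's output for the instance and for the baseline. Because the number of coalitions grows exponentially with the number of features, practical tools rely on approximations or model-specific algorithms instead of full enumeration.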

Applications of Explainable AI Across Industries

1. Healthcare

In medical imaging or diagnosis, XAI clarifies which features (e.g., cell patterns or tissue density) led to a result—helping doctors trust AI recommendations.

2. Finance

Banks use XAI to explain credit risk models and justify loan decisions, ensuring fairness and compliance.

3. Human Resources

Recruitment systems that use AI to screen candidates can explain selection or rejection reasons, reducing discrimination risks.

4. Autonomous Systems

For self-driving cars, explainability helps identify decision pathways behind navigation choices, improving safety and accountability.

5. Legal and Governance

Government and legal institutions can use XAI to scrutinize automated sentencing, audit, and predictive policing systems for fairness.

Challenges in Implementing Explainable AI

Despite its benefits, Explainable AI faces several hurdles:

- Interpretability vs. Accuracy Trade-Off: Simpler models are easier to explain but may lack the predictive accuracy of more complex ones.

- Lack of Standardization: There’s no universal framework for AI explainability yet.

- Interpreting Deep Learning Models: Neural networks are inherently complex, making full transparency difficult.

- Balancing Privacy and Transparency: Explaining models without exposing sensitive data remains a challenge.

Best Practices for Building Explainable AI

- Adopt a Human-Centered Design Approach – Explanations should be meaningful to end users, not just data scientists.

- Use Interpretable Models Where Possible – Prefer decision trees or logistic regression for transparent decisions.

- Leverage Explainability Tools – Use techniques such as LIME, SHAP, or counterfactual explanations to interpret models that are not transparent by design.

- Ensure Data Fairness – Regularly audit training data for bias and ensure diversity in datasets.

- Integrate Ethical Review Processes – Include ethical oversight in AI lifecycle management to ensure responsible deployment.
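Of the techniques mentioned in the list above, counterfactual explanations are perhaps the easiest to illustrate: they answer the question "what would need to change for the decision to be different?" The sketch below searches one feature at a time for the smallest change that flips a rejection. The decision rule, feature names, step sizes, and applicant are all hypothetical, and real counterfactual methods typically search over several features at once while enforcing plausibility constraints.

```python
def approve(applicant):
    """Hypothetical decision rule standing in for a trained model."""
    score = (
        applicant["credit_score"]
        + 2 * applicant["income_k"]
        - 40 * applicant["missed_payments"]
    )
    return score >= 700


def single_feature_counterfactuals(model, applicant, steps, max_steps=20):
    """For each feature, find the nearest value (in fixed steps) that flips the decision.

    Features that cannot flip the decision within max_steps are omitted.
    """
    results = {}
    for feature, step in steps.items():
        candidate = dict(applicant)
        for _ in range(max_steps):
            candidate[feature] += step
            if model(candidate):
                results[feature] = candidate[feature]
                break
    return results


applicant = {"credit_score": 620, "income_k": 40, "missed_payments": 2}
# Direction and size of one step per feature (assumed for illustration).
steps = {"credit_score": 10, "income_k": 5, "missed_payments": -1}

counterfactuals = single_feature_counterfactuals(approve, applicant, steps)
for feature, needed in counterfactuals.items():
    print(f"Approved if {feature} were {needed} instead of {applicant[feature]}")
```

Explanations of this form are meaningful to end users because they describe an actionable change rather than internal model weights, which fits the human-centered approach recommended above.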

The Future of Explainable AI

As AI continues to power critical sectors, explainability will become a non-negotiable standard. Future advancements in AI transparency will likely focus on combining performance with interpretability through hybrid models that balance accuracy and understanding.

With the rise of regulatory frameworks, ethical AI initiatives, and AI governance boards, Explainable AI will define how organizations deploy trustworthy and human-aligned intelligent systems.

Conclusion

Explainable AI is more than a technical requirement—it’s a moral and social responsibility. By prioritizing transparency, fairness, and accountability, organizations can foster greater trust between humans and machines.

In 2025 and beyond, the true success of AI will not only be measured by what it can do but also by how well we can understand and trust what it does.