As artificial intelligence becomes central to modern applications, developers are constantly looking for ways to improve the performance of large language models (LLMs). Two common techniques used for optimizing AI responses are prompt engineering and fine-tuning.

Both methods help tailor AI models for specific tasks, but they differ significantly in cost, complexity, and long-term scalability. Understanding their advantages and limitations can help businesses choose the right strategy for their AI-powered applications.

What is Prompt Engineering?

Prompt engineering involves designing structured prompts or instructions to guide an AI model toward producing the desired output. Instead of modifying the model itself, developers carefully craft inputs to influence how the model responds.

For example, instead of asking an AI model:

“Explain cloud computing.”

A prompt engineer might structure it like this:

“Explain cloud computing in simple terms for a beginner. Include three benefits and one real-world example.”

This approach improves the clarity and relevance of AI responses without changing the model’s internal parameters.
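The difference between the two prompts above can be captured in a small helper. This is a minimal sketch, not a standard API: the function name `build_prompt` and its parameters are hypothetical, chosen only to show how explicit constraints (audience, required content) can be assembled into a structured prompt.

```python
def build_prompt(task: str, audience: str, requirements: list[str]) -> str:
    """Assemble a structured prompt from a task, an audience, and explicit requirements.

    Hypothetical helper for illustration; the model itself is never modified.
    """
    sentences = [f"{task} in simple terms for {audience}."]
    if requirements:
        # Join the requirements into a single "Include ..." sentence.
        sentences.append("Include " + " and ".join(requirements) + ".")
    return " ".join(sentences)


prompt = build_prompt(
    "Explain cloud computing",
    "a beginner",
    ["three benefits", "one real-world example"],
)
print(prompt)
```

Keeping the constraints as separate arguments makes it easy to change the audience or the required content without rewriting the whole prompt by hand.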

Advantages of Prompt Engineering

1. Low Cost Implementation

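Prompt engineering requires no model training and no dedicated training hardware. The main costs are the developer time spent designing and testing prompts, plus the usual per-request usage fees, which typically grow with prompt length.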

2. Faster Development

Changes can be implemented instantly by adjusting prompts. There is no need to retrain the model.

3. Flexibility

Prompt engineering allows developers to quickly experiment with different instructions and formats to achieve the desired results.

4. No Training Infrastructure Required

Because the model itself is not modified, companies can call existing model APIs without building or operating training pipelines.

Limitations of Prompt Engineering

Despite its advantages, prompt engineering has certain limitations:

- Performance can vary depending on prompt wording.

- It may require constant testing and refinement.

- Complex domain-specific tasks may still produce inconsistent results.
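Because results depend on wording, it can help to re-check prompt variants automatically whenever a prompt changes. The sketch below is one possible approach under stated assumptions: `check_prompts` is a hypothetical helper, `fake_model` is a stand-in for a real model call, and the keyword check is a deliberately crude quality signal.

```python
from typing import Callable


def check_prompts(
    variants: dict[str, str],
    model: Callable[[str], str],
    required_terms: list[str],
) -> dict[str, bool]:
    """Run each prompt variant through `model` and report whether the
    output mentions every required term (case-insensitive)."""
    results = {}
    for name, prompt in variants.items():
        output = model(prompt).lower()
        results[name] = all(term.lower() in output for term in required_terms)
    return results


def fake_model(prompt: str) -> str:
    # Stand-in for a real LLM call (assumption): it only gives an example
    # when the prompt explicitly asks for one.
    if "example" in prompt.lower():
        return "Cloud computing rents servers over the internet. Example: online file storage."
    return "Cloud computing rents servers over the internet."


variants = {
    "bare": "Explain cloud computing.",
    "structured": "Explain cloud computing for a beginner with one real-world example.",
}
report = check_prompts(variants, fake_model, required_terms=["example"])
print(report)
```

In practice the stub would be replaced by a call to whichever model API the application uses, and the keyword check by whatever quality criteria matter for the task.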

What is Fine-Tuning?

Fine-tuning involves further training an existing, pretrained AI model on a specialized dataset, which updates the model’s internal parameters. Instead of relying solely on prompts, the model learns from additional examples tailored to a specific task.

For example, a company building a legal AI assistant might fine-tune a language model using thousands of legal documents and case summaries. This helps the model generate more accurate legal responses.
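To make the legal assistant example concrete, fine-tuning data is commonly stored as one JSON record per line (JSONL). The sketch below assumes a simple `prompt`/`completion` schema, which is an illustrative choice: the exact field names and format depend on the training tool or provider, and the placeholder strings stand in for real documents.

```python
import json


def to_jsonl(records: list[dict]) -> str:
    """Validate prompt/completion pairs and serialize them as JSONL text."""
    lines = []
    for index, record in enumerate(records):
        # Reject incomplete examples early; poor data leads to poor fine-tuning.
        if not record.get("prompt") or not record.get("completion"):
            raise ValueError(f"record {index} is missing a prompt or completion")
        lines.append(json.dumps(record, ensure_ascii=False))
    return "\n".join(lines) + "\n"


# Placeholder examples (assumption): real data would hold actual legal text.
examples = [
    {"prompt": "Summarize this case: <case text 1>", "completion": "<expert summary 1>"},
    {"prompt": "Summarize this case: <case text 2>", "completion": "<expert summary 2>"},
]
jsonl_text = to_jsonl(examples)
print(jsonl_text)
```

Validating every record before training is worthwhile because a fine-tuned model reproduces whatever patterns its examples contain, including mistakes.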

Advantages of Fine-Tuning

1. Higher Accuracy for Specialized Tasks

Fine-tuned models can perform significantly better than prompted general-purpose models in domain-specific applications such as healthcare, finance, or customer support, provided the training data is representative of the task.

2. Consistent Outputs

Because the model learns from many examples of the desired output, its responses tend to become more predictable and reliable.

3. Reduced Prompt Complexity

Developers do not need extremely detailed prompts once the model is fine-tuned for a particular use case.

4. Better Brand or Style Alignment

Companies can train models to respond in a specific tone, format, or writing style.

Limitations of Fine-Tuning

Fine-tuning also introduces several challenges:

- Higher infrastructure and training costs

- Need for high-quality datasets

- Longer development cycles

- Continuous retraining for updates

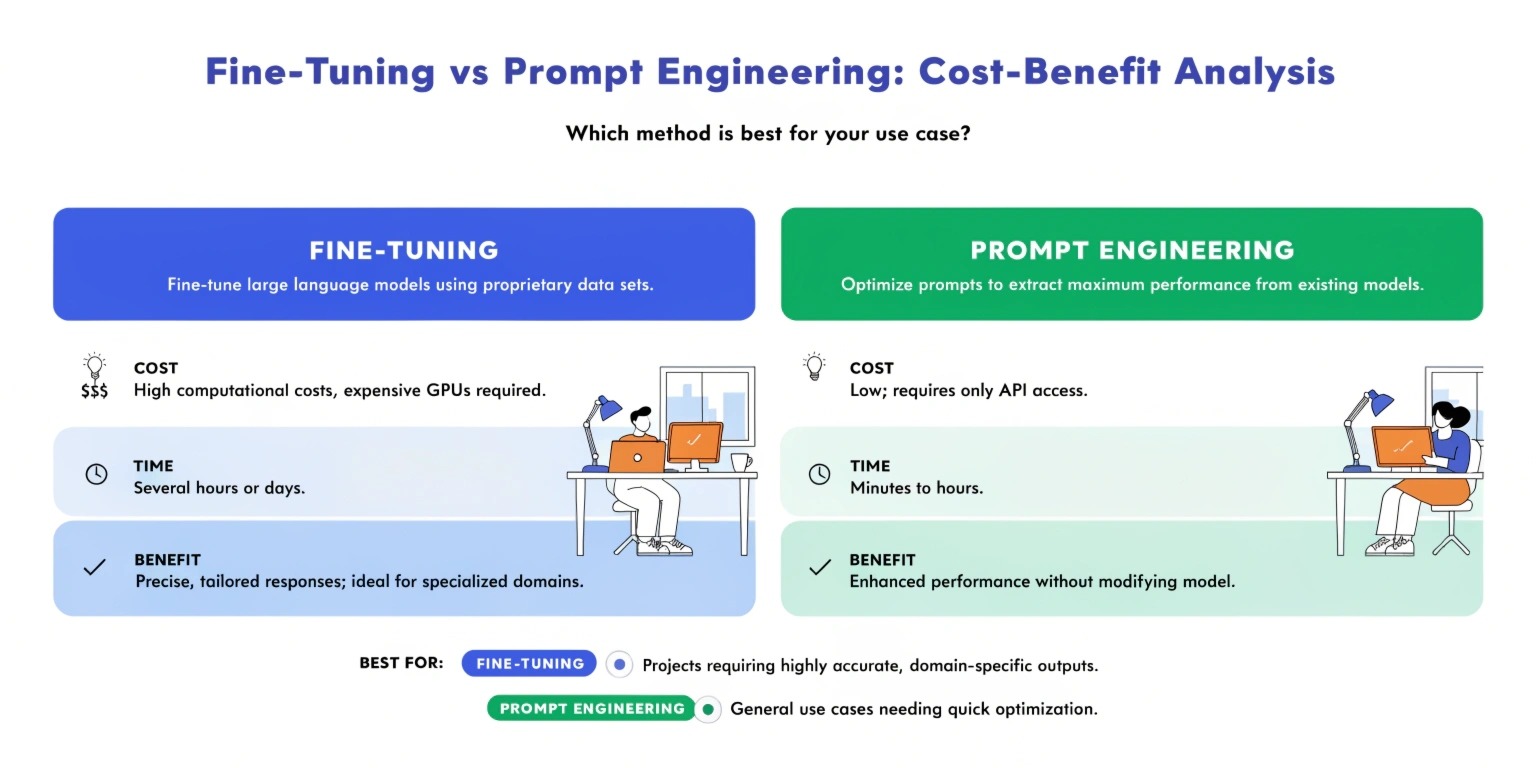

Cost Comparison

When evaluating prompt engineering versus fine-tuning, cost is often the deciding factor.

Prompt Engineering Costs

Prompt engineering is generally inexpensive because it does not require training resources. The main investment involves:

- Developer time

- Testing and experimentation

- Prompt management tools

For startups and small businesses, prompt engineering offers an affordable entry point into AI.

Fine-Tuning Costs

Fine-tuning requires significantly more investment, including:

- Data collection and preparation

- GPU computing resources

- Machine learning expertise

- Model monitoring and retraining

However, for companies that rely heavily on AI automation, the long-term benefits may justify the cost.
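Whether the long-term benefits justify the cost can be estimated with a simple break-even calculation. The sketch below rests on one assumption that is not guaranteed in practice: that a fine-tuned model is cheaper per request because it needs shorter prompts. All figures are hypothetical and are given in whole dollars, with per-request costs expressed per 1,000 requests.

```python
import math
from typing import Optional


def break_even_batches(
    fine_tune_cost: int,
    prompt_cost_per_1k: int,
    tuned_cost_per_1k: int,
) -> Optional[int]:
    """Return how many batches of 1,000 requests are needed before the
    one-time fine-tuning cost is recovered, or None if it never is."""
    saving_per_1k = prompt_cost_per_1k - tuned_cost_per_1k
    if saving_per_1k <= 0:
        # The fine-tuned model is not cheaper per request, so there is no break-even.
        return None
    return math.ceil(fine_tune_cost / saving_per_1k)


# Hypothetical figures: $5,000 one-time fine-tuning cost, $4 per 1,000
# requests with long prompts, $1 per 1,000 requests after fine-tuning.
batches = break_even_batches(fine_tune_cost=5000, prompt_cost_per_1k=4, tuned_cost_per_1k=1)
print(batches)  # 1667 batches, i.e. roughly 1.67 million requests
```

At low request volumes the break-even point may never be reached, which is one reason prompt engineering is usually the cheaper starting point.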

Performance and Scalability

Prompt Engineering Scalability

Prompt engineering works well for general tasks such as:

- Content generation

- Chatbots

- Coding assistance

- Marketing automation

However, as applications become more complex, maintaining large prompt libraries can become difficult.
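One way to keep a growing prompt library manageable is to store prompts as named, versioned templates rather than scattering strings through the codebase. The class below is a minimal in-memory sketch; `PromptLibrary` and its methods are hypothetical names, and a real system would likely persist versions in a file or database.

```python
from string import Template
from typing import Optional


class PromptLibrary:
    """Keep every version of each named prompt template."""

    def __init__(self) -> None:
        self._versions: dict[str, list[str]] = {}

    def register(self, name: str, text: str) -> int:
        """Store a new version of a prompt and return its 1-based version number."""
        versions = self._versions.setdefault(name, [])
        versions.append(text)
        return len(versions)

    def render(self, name: str, version: Optional[int] = None, **fields: str) -> str:
        """Fill in a template; uses the latest version unless one is given."""
        versions = self._versions[name]
        text = versions[-1] if version is None else versions[version - 1]
        # substitute() raises KeyError if a placeholder is left unfilled.
        return Template(text).substitute(**fields)


library = PromptLibrary()
library.register("explain", "Explain $topic.")
library.register("explain", "Explain $topic in simple terms for $audience.")

latest = library.render("explain", topic="cloud computing", audience="a beginner")
first = library.render("explain", version=1, topic="cloud computing")
print(latest)
print(first)
```

Keeping old versions around makes it possible to compare outputs before and after a wording change, or to roll back if a new prompt performs worse.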

Fine-Tuning Scalability

Fine-tuning is better suited for applications that require deep domain knowledge, such as:

- Medical AI assistants

- Financial analysis tools

- Legal document processing

- Technical customer support

In these scenarios, fine-tuned models tend to provide more reliable performance than prompting alone.

When to Use Prompt Engineering

Prompt engineering is ideal when:

- You need quick AI implementation

- The application handles general tasks

- Budgets are limited

- Frequent experimentation is required

Many companies start with prompt engineering before considering fine-tuning.

When to Use Fine-Tuning

Fine-tuning is the better option when:

- High accuracy is required

- The AI application is domain-specific

- The system will operate at large scale

- Consistency is critical

Enterprises building production-level AI systems often combine fine-tuning with prompt engineering for optimal results.
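The two checklists above can be turned into a rough first-pass heuristic. This is only a sketch: the function `suggest_strategy` is hypothetical, and the threshold of three matching criteria is an arbitrary choice for illustration, not a rule from any standard.

```python
def suggest_strategy(
    high_accuracy: bool,
    domain_specific: bool,
    large_scale: bool,
    consistency_critical: bool,
    limited_budget: bool,
) -> str:
    """Suggest a starting strategy based on the checklist criteria."""
    fine_tune_signals = sum(
        [high_accuracy, domain_specific, large_scale, consistency_critical]
    )
    # Arbitrary illustrative threshold: most fine-tuning criteria apply
    # and the budget allows for training costs.
    if fine_tune_signals >= 3 and not limited_budget:
        return "fine-tuning combined with prompt engineering"
    # Default: start with the cheaper, faster option and revisit later.
    return "prompt engineering"


enterprise = suggest_strategy(True, True, True, True, limited_budget=False)
startup = suggest_strategy(False, False, False, False, limited_budget=True)
print(enterprise)
print(startup)
```

The default branch reflects the common pattern of starting with prompt engineering and moving to fine-tuning only once its limits become clear.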

Final Thoughts

Prompt engineering and fine-tuning are not competing techniques; they are complementary strategies for improving AI performance.

Prompt engineering offers a fast, low-cost solution for many AI use cases, while fine-tuning provides deeper customization and higher accuracy for specialized applications.

The best approach depends on your project goals, available resources, and the level of performance required. By carefully evaluating costs, scalability, and development complexity, businesses can choose the right strategy to maximize the value of their AI investments.