The rapid advancement of Artificial Intelligence has dramatically changed how modern mobile applications operate, but traditional cloud-based AI architectures come with challenges such as latency, connectivity dependency, and privacy concerns. As mobile users demand real-time responsiveness, seamless interaction, and minimal resource consumption, developers are increasingly turning toward On-Device AI and TinyML to optimize performance and deliver smarter, faster, and more reliable application experiences.

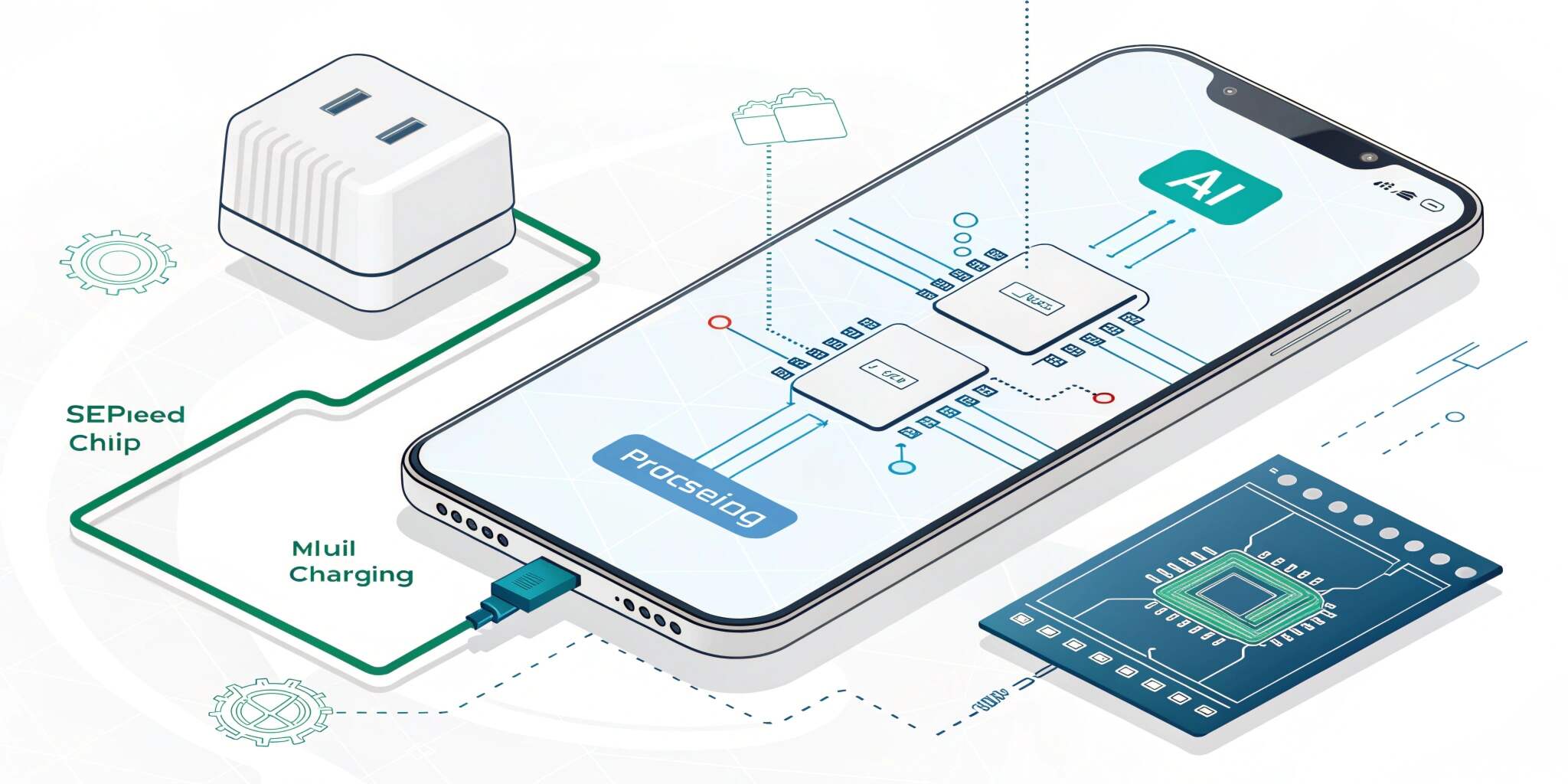

On-Device AI refers to the ability of devices such as smartphones, wearables, and embedded IoT systems to run machine learning models locally rather than sending data to the cloud. TinyML is the branch of machine learning focused on models small and efficient enough to run on low-power hardware, such as microcontrollers, with limited memory and compute resources. Together, these technologies enable a new generation of intelligent applications that perform real-time AI tasks directly on the device, without requiring constant internet access or heavy compute infrastructure.

One of the greatest advantages of On-Device AI is reduced latency and improved responsiveness. For applications such as augmented reality, live voice assistants, predictive text, image recognition, and real-time translation, milliseconds can determine the quality of the user experience. Processing data locally removes the network round trip, so results arrive sooner and performance stays smooth even in low-network environments. This is particularly valuable in mission-critical settings such as autonomous systems, emergency services applications, healthcare response, and industrial mobility tools, where delays can cause failures and create safety risks.

Another significant benefit is data privacy and security. As cybersecurity threats and data breaches increase, users expect greater control over their personal information. By keeping sensitive data on the device and eliminating unnecessary transfers to cloud servers, On-Device AI can make it easier to comply with data protection rules such as GDPR, HIPAA, and financial security regulations, although it does not guarantee compliance on its own. Privacy-first AI is becoming a competitive advantage for businesses looking to build trust with users while reducing infrastructure dependency.

From a technical perspective, TinyML allows developers to run deep learning models on low-power hardware by applying optimization techniques such as quantization (storing weights and activations at lower numeric precision), pruning (removing weights that contribute little), and other forms of model compression. This reduces model size and computation requirements while maintaining reasonable prediction accuracy. With advances in neural architecture search, lightweight model architectures like MobileNet and SqueezeNet, deployed through runtimes such as TensorFlow Lite Micro, enable capable inference even on entry-level smartphones and small embedded boards. Combined with hardware accelerators such as Google Edge TPU, Apple Neural Engine, and Qualcomm Hexagon DSPs, TinyML gives developers tools to enhance performance without overloading the device.
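To make quantization and pruning concrete, here is a minimal sketch in plain Python. It is an illustration under simplifying assumptions, not how any particular framework implements these steps: the function names `quantize_int8`, `dequantize`, and `prune_by_magnitude` are hypothetical, the weights are a flat list of floats, and a single scale and zero point are used for the whole list.

```python
def quantize_int8(weights):
    """Affine quantization of a list of floats to the int8 range [-128, 127]."""
    # Widen the range to include 0.0 so that zero is represented exactly.
    w_min = min(min(weights), 0.0)
    w_max = max(max(weights), 0.0)
    # One int8 step corresponds to `scale` in float units (fall back to 1.0
    # when every weight is zero, to avoid dividing by zero).
    scale = (w_max - w_min) / 255.0 or 1.0
    zero_point = round(-128 - w_min / scale)
    quantized = [
        max(-128, min(127, round(w / scale) + zero_point)) for w in weights
    ]
    return quantized, scale, zero_point


def dequantize(quantized, scale, zero_point):
    """Map int8 values back to approximate floats."""
    return [(q - zero_point) * scale for q in quantized]


def prune_by_magnitude(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)
    smallest = sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k]
    pruned = list(weights)
    for i in smallest:
        pruned[i] = 0.0
    return pruned


weights = [-1.0, 0.0, 0.5, 1.0]
quantized, scale, zero_point = quantize_int8(weights)
restored = dequantize(quantized, scale, zero_point)
print(quantized, restored)
print(prune_by_magnitude([0.9, -0.05, 0.4, 0.01], sparsity=0.5))
```

Each int8 value takes one byte instead of the four bytes of a 32-bit float, which is where the roughly 4x size reduction comes from; the cost is a rounding error of at most one quantization step per weight. Production toolchains typically refine this with per-channel scales and calibration data.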

On-Device AI and TinyML are already transforming numerous real-world applications. In health and fitness apps, real-time activity recognition can process accelerometer and motion data locally, keeping personal data on the device. In mobile gaming and AR/VR, on-device inference supports low-latency interaction and visual effects. Security and authentication apps use on-device face or fingerprint recognition instead of sending biometric data to the cloud. Predictive maintenance and industrial apps use TinyML for sensor-based anomaly detection in remote field environments. Smart camera apps perform image enhancement, noise reduction, and object detection without requiring server processing.
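As a rough illustration of the sensor-based anomaly detection case, the sketch below flags readings that deviate strongly from what has been seen so far. It is a simple streaming z-score baseline rather than a trained model, and the class name `StreamingAnomalyDetector`, the threshold of 3 standard deviations, and the warm-up length are all assumptions chosen for the example. It keeps only three numbers of state, which is the kind of memory footprint constrained devices call for.

```python
import math


class StreamingAnomalyDetector:
    """Flags readings far from the running mean, using constant memory."""

    def __init__(self, threshold=3.0, warmup=10):
        self.threshold = threshold  # z-score above which a reading is flagged
        self.warmup = warmup        # readings to observe before flagging
        self.n = 0                  # number of normal readings seen
        self.mean = 0.0             # running mean of normal readings
        self.m2 = 0.0               # running sum of squared deviations

    def update(self, x):
        """Return True if x looks anomalous relative to earlier readings."""
        is_anomaly = False
        if self.n >= self.warmup:
            std = math.sqrt(self.m2 / (self.n - 1))
            if std > 0 and abs(x - self.mean) / std > self.threshold:
                is_anomaly = True
        if not is_anomaly:
            # Welford's online update; anomalies are excluded so that they
            # do not distort the baseline statistics.
            self.n += 1
            delta = x - self.mean
            self.mean += delta / self.n
            self.m2 += delta * (x - self.mean)
        return is_anomaly


detector = StreamingAnomalyDetector()
readings = [1.0, 1.1, 0.9, 1.05, 0.95] * 4 + [5.0]
flags = [detector.update(r) for r in readings]
print(flags)
```

A real deployment would more likely run a small trained model on windows of sensor data, but the structure is similar: process each reading locally and only report the events that matter.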

For developers, the shift toward On-Device AI signals a major architectural evolution in app development. Reducing cloud workloads lowers operational costs and dependency on external infrastructure. Apps become more resilient and remain functional offline, improving accessibility in remote regions. Additionally, as 5G and edge computing expand, hybrid cloud-edge architectures will combine local processing with distributed intelligence, handling latency-sensitive work on the device and sending heavier workloads to nearby edge or cloud servers.

Challenges remain, however. Designing and compressing lightweight AI models requires strong knowledge of hardware constraints, and balancing accuracy against performance is often difficult. Limited memory and power budgets restrict what a model can do, and cross-device compatibility remains a barrier. Despite these hurdles, rapid advancements in toolchains like TensorFlow Lite, PyTorch Mobile, Core ML, and TinyML frameworks are making development faster and more accessible.

In conclusion, On-Device AI and TinyML represent a major shift in how future mobile applications will be built, moving intelligence from remote servers to the device itself. They improve privacy, speed, efficiency, and user experience, enabling smart apps that can operate independently of the network. As the demand for real-time interactions and enhanced performance continues to grow, these technologies will become fundamental to next-generation app development.