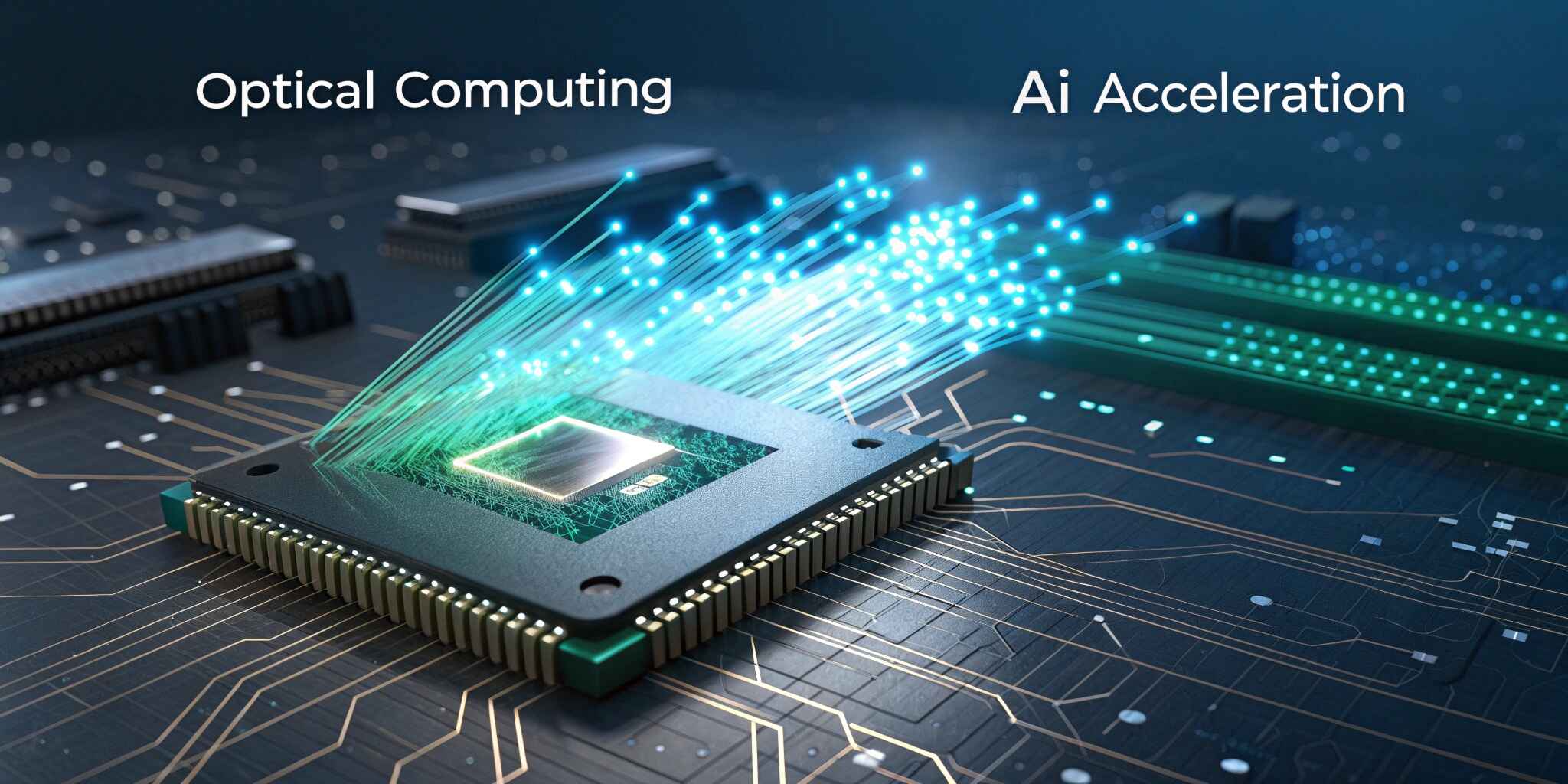

Artificial intelligence is advancing rapidly, and the demand for computational power has grown with it. Conventional electronic processors, including GPUs and TPUs, are approaching their physical and power-efficiency limits. To meet the needs of large-scale deep learning and real-time AI analytics, researchers are turning toward optical computing, a technology that processes data using light (photons) instead of electrical signals (electrons).

What Is Optical Computing?

Optical computing uses lasers, waveguides, lenses, and photonic circuits to perform mathematical operations on light as it propagates. Unlike electronic processors, which rely on the movement of electrons through circuits, photonic processors manipulate light beams to compute, which enables highly parallel processing without the resistive heating that affects electrical wires. The lasers, modulators, and detectors around the optical core still consume power.

In simpler terms, optical computing can carry out very large numbers of calculations in parallel with very low latency, making it attractive for complex AI and machine-learning models that require enormous computation.

Why Optical Computing for AI Acceleration Matters

Modern AI models such as GPT, LLaMA, and multimodal generative models contain billions of parameters and demand massive processing capability and energy. Data centers powering AI training consume megawatts of electricity and require expensive cooling.

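Optical chips aim to address these problems by offering:

- Ultra-high computational speed: optical signals support very high bandwidth, and a computation completes in the time light takes to cross the chip.

- Low power consumption: light in a waveguide does not suffer resistive losses, so the computation itself generates little heat, although light sources and electro-optic conversion still draw power.

- Massive parallel processing: multiple wavelengths can carry different data simultaneously.

- Reduced latency: ideal for real-time AI decision systems.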

This positions optical computing as a strong candidate for the next major leap beyond traditional semiconductor scaling.
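The parallelism point above can be illustrated with a toy model. The sketch below assumes a single shared set of weights and three wavelength channels, each carrying its own input vector; the channel names, weights, and values are hypothetical and chosen only for illustration. In hardware the channels would travel through the same waveguide at the same time, which this software model can only imitate with a loop.

```python
def optical_pass(weights, channels):
    """Model one pass of several wavelength channels through shared weights.

    Each channel carries an independent input vector. Real hardware would
    process all channels concurrently; this toy model evaluates them in turn.
    """
    return {
        wavelength: sum(w * x for w, x in zip(weights, signal))
        for wavelength, signal in channels.items()
    }


# Hypothetical weights and per-wavelength inputs (illustrative values only).
weights = [0.5, 0.25, 0.25]
channels = {
    "lambda_1": [1.0, 0.0, 0.0],
    "lambda_2": [0.0, 1.0, 0.0],
    "lambda_3": [0.8, 0.4, 0.4],
}

results = optical_pass(weights, channels)
for wavelength, value in results.items():
    print(f"{wavelength}: weighted sum = {value:.3f}")
```

Each wavelength behaves like one element of a batch: the weights are applied once, and every channel yields its own result.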

How Optical Neural Networks Work

Optical neural networks (ONNs) perform matrix multiplications, the backbone of deep learning, using optical interference and light modulation. Instead of executing these multiplications digitally, ONNs pass light through components such as beam splitters, phase shifters, and modulators, so the result emerges as the light propagates through the circuit.

A typical pass through an ONN layer has three steps:

- Input data is encoded into light signals.

- Photonic circuits modify and combine these signals.

- Photodetectors convert the output light back into electrical signals for the rest of the AI model.
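The three steps above can be sketched with a single idealized Mach-Zehnder interferometer, a common building block in integrated photonic circuits. This is a minimal simulation under stated assumptions, not a model of any specific chip: the device is lossless, the beam splitters are perfect 50:50 splitters, and the function names (`mzi`, `propagate`, `detect`) and input values are hypothetical.

```python
import cmath
import math


def matmul(a, b):
    """Multiply two 2x2 complex matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def mzi(theta, phi):
    """Transfer matrix of an idealized, lossless Mach-Zehnder interferometer."""
    s = 1 / math.sqrt(2)
    splitter = [[s, 1j * s], [1j * s, s]]          # ideal 50:50 beam splitter
    inner = [[cmath.exp(1j * theta), 0], [0, 1]]   # phase shifter between splitters
    outer = [[cmath.exp(1j * phi), 0], [0, 1]]     # phase shifter at the input
    return matmul(splitter, matmul(inner, matmul(splitter, outer)))


def propagate(matrix, fields):
    """Apply a 2x2 transfer matrix to the two input field amplitudes."""
    return [sum(matrix[i][k] * fields[k] for k in range(2)) for i in range(2)]


def detect(fields):
    """Photodetection: convert complex field amplitudes to intensities."""
    return [abs(f) ** 2 for f in fields]


# Step 1: encode the data as optical field amplitudes (illustrative values).
inputs = [0.6, 0.8]

# Step 2: the photonic circuit mixes the signals through interference.
outputs = propagate(mzi(theta=math.pi / 2, phi=0.0), inputs)

# Step 3: photodetectors read the result out as intensities.
intensities = detect(outputs)
print("output intensities:", [round(v, 4) for v in intensities])
```

In this model, `theta = 0` routes all light to the opposite output port and `theta = math.pi` keeps it in the same port, so tuning the phase sets the effective weight. Larger matrices are built by arranging many such 2x2 elements in a mesh.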

Real-World Applications

Optical AI acceleration is being explored in multiple high-impact industries:

| Industry | Use Case |
| --- | --- |
| Autonomous vehicles | Faster perception & collision detection |
| Healthcare imaging | Real-time diagnostics and pathology analysis |
| Data centers | High-speed, energy-efficient training and inference |
| Telecom & 5G | Managing high-bandwidth networks |
| Defense & aerospace | Autonomous navigation and threat detection |

Challenges & Current Limitations

While promising, optical computing is still emerging and faces challenges:

- Complex and expensive manufacturing

- Integration with existing digital hardware

- Limited precision compared to electronic computing, because analog optical signals are subject to noise and finite resolution

- Need for new software and programming models

However, rapid investment and research by companies like Lightmatter, Lightelligence, Intel, and IBM are accelerating commercialization.
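The limited-precision challenge listed above can be made concrete with a small experiment. The sketch below assumes that the finite resolution of an analog system can be approximated by uniformly quantizing inputs and weights to a given number of bits. Real devices also have noise sources this model ignores, and the vectors are illustrative values rather than measurements.

```python
def quantize(value, bits):
    """Round a value in [-1, 1] to one of 2**bits evenly spaced levels."""
    steps = 2 ** bits - 1
    clipped = max(-1.0, min(1.0, value))
    return round((clipped + 1) / 2 * steps) / steps * 2 - 1


def dot(a, b):
    """Exact dot product in ordinary floating point."""
    return sum(x * y for x, y in zip(a, b))


def analog_dot(weights, inputs, bits):
    """Dot product after quantizing both operands (assumed analog model)."""
    qw = [quantize(w, bits) for w in weights]
    qx = [quantize(x, bits) for x in inputs]
    return dot(qw, qx)


# Illustrative values only.
weights = [0.31, -0.72, 0.05, 0.88]
inputs = [0.50, 0.25, -0.90, 0.10]

exact = dot(weights, inputs)
errors = {bits: abs(analog_dot(weights, inputs, bits) - exact)
          for bits in (4, 8, 16)}

for bits, error in errors.items():
    print(f"{bits:2d} bits: absolute error = {error:.2e}")
```

Under this assumption, the 4-bit result deviates noticeably from the exact value while the 16-bit result is close to it, which is one reason precision-sensitive workloads are harder to map onto analog optical hardware.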

The Future of AI Acceleration

As AI models grow larger and computing demand intensifies, optical processors may become common in enterprise-scale AI training, edge computing, and autonomous robotics. Hybrid systems that combine photonic and electronic architectures are expected to play a leading role over the next decade.

The shift from electrons to photons could become as revolutionary as the move from CPUs to GPUs.

Conclusion

Optical computing is no longer just a futuristic concept; it is emerging as a potential enabler of next-generation AI. If the manufacturing, integration, and precision challenges can be overcome, photonic processors could deliver major gains in performance, efficiency, and scalability, and help power the autonomous, intelligent systems of tomorrow.