Neuromorphic computing mimics the human brain to create faster, more energy-efficient IT systems. Explore its impact on AI, robotics, and edge computing, and how it's redefining the architecture of tomorrow’s IT infrastructure.

Introduction

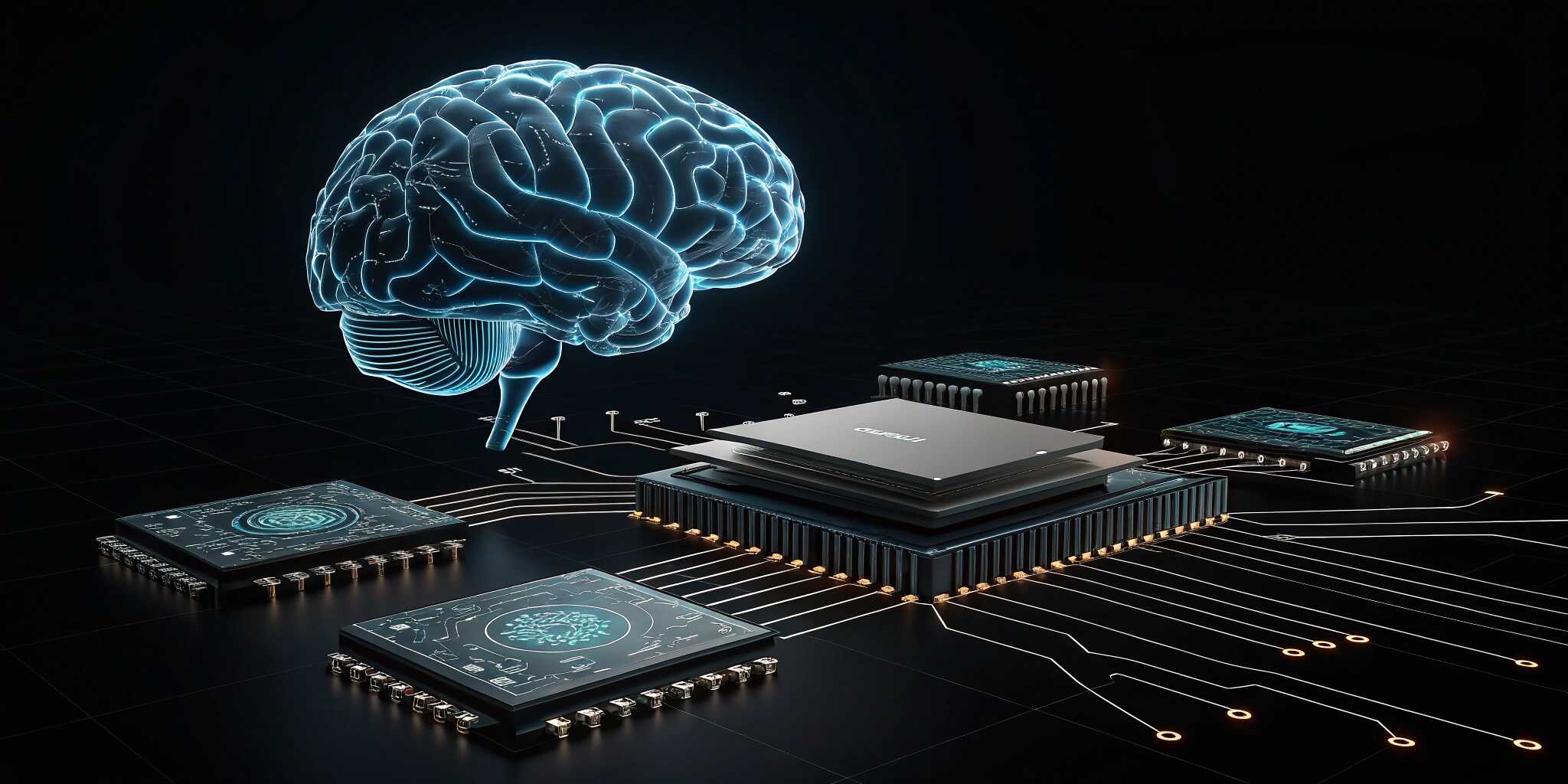

As artificial intelligence (AI) and edge technologies grow more advanced, so does the demand for computing systems that are faster, more adaptive, and more energy-efficient. One approach gaining attention is neuromorphic computing, a brain-inspired technology that may reshape the architecture of future IT systems.

By mimicking the structure and function of the human brain, neuromorphic systems promise substantial gains in power efficiency, on-device learning, and real-time data processing, particularly for workloads built around sparse, event-driven sensor data.

What Is Neuromorphic Computing?

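Neuromorphic computing is a non-traditional computing architecture that uses artificial neurons and synapses to process information in a way loosely modeled on biological brains. Conventional computers follow the von Neumann model: a processor fetches instructions and data from a separate memory and executes them step by step. Neuromorphic systems instead place memory next to computation and communicate through discrete spikes, which lets them: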

- Process multiple data streams in parallel

- Adapt and learn in real time

- Consume significantly less energy on suitable workloads

- Handle noisy or incomplete data effectively
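To make "artificial neurons" more concrete, here is a minimal sketch of a leaky integrate-and-fire (LIF) neuron, a simple spiking-neuron model widely used in neuromorphic research. The class name, its parameter values, and the discrete time step are illustrative assumptions, not the neuron model of any particular chip. The neuron accumulates input, leaks part of it each step, and emits a spike only when its potential crosses a threshold.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class LIFNeuron:
    """A minimal leaky integrate-and-fire neuron (illustrative parameters)."""
    threshold: float = 1.0  # membrane potential at which the neuron spikes
    leak: float = 0.9       # fraction of potential retained each time step
    reset: float = 0.0      # potential immediately after a spike
    potential: float = 0.0  # current membrane potential (internal state)

    def step(self, input_current: float) -> bool:
        """Advance one time step; return True if the neuron spikes."""
        # Leak part of the stored potential, then integrate the new input.
        self.potential = self.potential * self.leak + input_current
        if self.potential >= self.threshold:
            self.potential = self.reset  # fire, then reset
            return True
        return False


def run(neuron: LIFNeuron, inputs: list[float]) -> list[int]:
    """Feed a sequence of inputs and record 1 for each spike, 0 otherwise."""
    return [int(neuron.step(x)) for x in inputs]


if __name__ == "__main__":
    print(run(LIFNeuron(), [0.3, 0.3, 0.3, 0.3, 0.0, 0.0, 0.6, 0.6]))
```

Running the script prints `[0, 0, 0, 1, 0, 0, 0, 1]`: weak inputs must accumulate over four steps before the neuron fires, while stronger inputs trigger a spike after two. Between spikes the neuron produces no output at all, which is the behavior event-driven hardware exploits.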

Neuromorphic chips such as IBM’s TrueNorth and Intel’s Loihi are early examples demonstrating this innovative approach.

Why It Matters in Future IT Systems

As IT systems scale and grow more complex, neuromorphic computing addresses several key challenges:

- Energy Efficiency: Because neuromorphic processors compute only when spikes arrive, they can use far less energy on sparse workloads, making them well suited to mobile devices, wearables, and IoT.

- Real-Time Learning: They can adapt on the fly, which is useful in dynamic environments such as robotics or autonomous systems.

- Scalability: Neuromorphic architectures support high parallelism, enabling more scalable computing infrastructures.

- Edge AI: They enable intelligent processing at the edge, minimizing latency and reducing dependence on cloud computing.
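Much of the energy argument rests on event-driven computation: work happens only when an input neuron spikes. The sketch below compares one layer computed densely with the same layer computed event-driven, counting arithmetic operations. The function names are hypothetical, and an operation count is only a rough proxy; real energy savings depend on the hardware and on how sparse the activity actually is.

```python
import random


def dense_layer(weights, inputs):
    """Conventional approach: multiply every input by every weight."""
    n_out = len(weights[0])
    out = [0.0] * n_out
    ops = 0
    for i, x in enumerate(inputs):
        for j in range(n_out):
            out[j] += x * weights[i][j]  # executed even when x == 0
            ops += 1
    return out, ops


def event_driven_layer(weights, spikes):
    """Event-driven approach: only inputs that spiked trigger any work."""
    n_out = len(weights[0])
    out = [0.0] * n_out
    ops = 0
    for i, s in enumerate(spikes):
        if not s:
            continue  # silent input: skip it entirely
        for j in range(n_out):
            out[j] += weights[i][j]  # spikes are binary, so add instead of multiply
            ops += 1
    return out, ops


if __name__ == "__main__":
    rng = random.Random(0)
    n_in, n_out = 100, 10
    weights = [[rng.uniform(-1, 1) for _ in range(n_out)] for _ in range(n_in)]
    # Assumed sparse activity: roughly 5% of inputs spike in this time step.
    spikes = [1 if rng.random() < 0.05 else 0 for _ in range(n_in)]
    dense_out, dense_ops = dense_layer(weights, spikes)
    event_out, event_ops = event_driven_layer(weights, spikes)
    assert dense_out == event_out  # same result, different amount of work
    print(f"dense ops: {dense_ops}, event-driven ops: {event_ops}")
```

Both functions produce identical outputs; the dense version always performs `n_in * n_out` operations, while the event-driven version performs `n_out` operations per spike, so its cost scales with activity rather than with layer size.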

Applications and Use Cases

- Autonomous Vehicles: Neuromorphic chips can interpret sensory data (vision, sound, motion) in real time, enhancing decision-making and safety.

- Healthcare Devices: Implantable devices and wearables benefit from low-power, adaptive processing for real-time diagnostics and monitoring.

- Smart Robotics: Robots powered by neuromorphic systems could achieve more human-like perception and behavior.

- Cybersecurity: The ability of neuromorphic systems to detect patterns and anomalies in real time can strengthen threat detection.

- AI at the Edge: In remote or bandwidth-constrained environments, neuromorphic computing enables responsive AI without relying on the cloud.
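Event-based sensors, such as neuromorphic cameras, report changes instead of full frames, which keeps the data stream sparse enough for low-power edge processing. As a simplified, hypothetical illustration of that idea, the delta encoder below turns a sampled signal into +1/-1 events whenever it moves by a fixed threshold. The function name, threshold, and sample readings are assumptions for illustration only; real event cameras perform a comparable change detection independently in each pixel.

```python
def delta_encode(samples, threshold):
    """Convert a sampled sensor signal into sparse (time_step, polarity) events.

    An event is emitted each time the signal moves by at least `threshold`
    from the level at which the previous event fired: +1 for an increase,
    -1 for a decrease. A constant signal produces no events at all.
    """
    if threshold <= 0:
        raise ValueError("threshold must be positive")
    if not samples:
        return []
    events = []
    reference = samples[0]  # level tracked since the last emitted event
    for t, value in enumerate(samples[1:], start=1):
        # A large jump can cross the threshold several times in one step;
        # emit one event per crossing.
        while value - reference >= threshold:
            reference += threshold
            events.append((t, +1))
        while reference - value >= threshold:
            reference -= threshold
            events.append((t, -1))
    return events


if __name__ == "__main__":
    readings = [0.0, 0.1, 0.5, 0.5, 0.5, 0.2]
    print(delta_encode(readings, threshold=0.25))
```

Running it prints `[(2, 1), (2, 1), (5, -1)]`: the jump at step 2 crosses the threshold twice, the flat stretch produces nothing, and the drop at step 5 yields one negative event. A downstream spiking network only has to process these three events rather than every sample.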

Neuromorphic vs Traditional AI Hardware

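| Feature | Traditional AI Hardware | Neuromorphic Computing |
|---|---|---|
| Architecture | Von Neumann | Brain-inspired |
| Processing | Synchronous and clock-driven | Parallel and event-driven |
| Energy Efficiency | High energy consumption | Very low power on sparse, event-driven workloads |
| Learning Capability | Mostly offline training | On-chip, real-time learning |
| Scalability | Limited by the processor-memory bottleneck | Scales by adding cores with local memory |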
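On-chip, real-time learning in neuromorphic systems typically relies on local rules that update a synapse using only the activity of the two neurons it connects. One widely studied example is spike-timing-dependent plasticity (STDP). The function below is a minimal pair-based STDP sketch; its name and all parameter values are illustrative assumptions, not the learning rule of TrueNorth, Loihi, or any other specific chip.

```python
import math


def stdp_update(weight, t_pre, t_post, a_plus=0.01, a_minus=0.012,
                tau_plus=20.0, tau_minus=20.0, w_min=0.0, w_max=1.0):
    """Pair-based STDP: adjust one synaptic weight from one pre/post spike pair.

    Times are in milliseconds. If the presynaptic spike precedes the
    postsynaptic spike, the synapse is strengthened; if it follows, the
    synapse is weakened. The change shrinks exponentially as the gap grows.
    """
    dt = t_post - t_pre
    if dt > 0:
        delta = a_plus * math.exp(-dt / tau_plus)    # causal pairing: potentiate
    elif dt < 0:
        delta = -a_minus * math.exp(dt / tau_minus)  # anti-causal pairing: depress
    else:
        delta = 0.0
    # Clip the weight to a bounded range, as limited-precision synapses would be.
    return min(w_max, max(w_min, weight + delta))
```

For example, `stdp_update(0.5, t_pre=10.0, t_post=15.0)` slightly increases the weight, while swapping the two spike times decreases it; the closer together the spikes, the larger the change. Because the rule needs only local spike times, it can run alongside inference without a separate training phase.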

Challenges Ahead

Despite its promise, neuromorphic computing is still in its early stages. Some of the hurdles include:

- Lack of Standardization: There's no unified programming model or hardware framework.

- Developer Expertise: Specialized knowledge is needed to design and deploy neuromorphic systems.

- Integration with Legacy Systems: Adapting traditional IT infrastructure for neuromorphic compatibility remains a challenge.

However, as research and funding grow, these gaps are steadily closing.

The Future Outlook

Neuromorphic computing may not replace conventional computing, but it is poised to augment existing systems in areas where intelligence, efficiency, and adaptability are paramount. Combining neuromorphic chips, AI models, and edge devices could lead to IT systems that keep learning and adapting after deployment, much as the human brain does.

Enterprises that adopt early neuromorphic solutions could gain a significant edge in innovation, performance, and sustainability.

Conclusion

Neuromorphic computing is more than a futuristic concept—it’s an emerging pillar of intelligent IT systems. By imitating the brain’s structure and functions, this technology offers transformative possibilities for real-time AI, low-power computing, and scalable IT infrastructure. As IT leaders look to the future, embracing brain-inspired hardware might just be the next big leap in digital evolution.